(Left) Raw video by Piyapong Suwannakul and (Right) Processed video using VI-Lab tools. See video HERE

Aim

Our oceans have been explored for hundreds of years, and exploration is becoming increasingly important because of the need to manage and conserve mineral and biological resources effectively and to better understand planetary-scale processes, including tectonics and marine hazards. However, exploration and analysis are always limited by the number of expert divers, the available technology and, in particular, cost. Advanced imaging methods now support a new paradigm of remote discovery, in which onshore experts with specific knowledge, such as geologists, archaeologists and biologists, can remotely model and explore underwater scenes.

The underwater environment combines several challenges. Water is a dynamic medium in which suspended particles move. Light scattering causes blur and halo effects, whilst light absorption leads to colour distortion and reduced contrast. A model of underwater imagery should therefore include temporally and spatially variant distortion, uneven intensity bias, multiplicative noise and additive noise. This project aims to exploit underwater image priors so that 3D mapping can be performed directly from raw underwater sequences.
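To make these degradation terms concrete, the sketch below simulates a commonly used simplified image formation model, I_c = (J_c t_c + B_c (1 - t_c))(1 + n_m) + n_a, where the scene radiance J in each colour channel c is attenuated by a distance-dependent transmission t_c = exp(-β_c d), veiling backscatter B_c fills in as t_c falls, and n_m and n_a are multiplicative and additive Gaussian noise. This is an illustrative assumption, not the model used in the VI-Lab methods: the function name, the parameter values and the optional smooth gain map standing in for uneven intensity bias are hypothetical, and temporal variation is not modelled.

```python
import numpy as np


def simulate_underwater(clean, depth, beta, backscatter, gain=None,
                        mult_sigma=0.0, add_sigma=0.0, seed=0):
    """Degrade a clean RGB image with a simplified underwater formation model.

    clean:       H x W x 3 array in [0, 1] (scene radiance J)
    depth:       H x W array of camera-to-scene distances d (metres)
    beta:        per-channel attenuation coefficients (hypothetical values)
    backscatter: per-channel veiling light B
    gain:        optional H x W illumination map (hypothetical stand-in for
                 uneven intensity bias, e.g. artificial light falloff)
    """
    rng = np.random.default_rng(seed)
    radiance = np.asarray(clean, dtype=float)
    if gain is not None:
        # Uneven intensity bias applied to the scene radiance
        radiance = radiance * np.asarray(gain)[..., None]
    # Transmission t_c = exp(-beta_c * d), per pixel and per channel
    t = np.exp(-np.asarray(depth)[..., None] * np.asarray(beta)[None, None, :])
    # Direct signal is attenuated; backscatter takes over as t drops
    image = radiance * t + np.asarray(backscatter) * (1.0 - t)
    # Multiplicative (signal-dependent) then additive noise
    image = image * (1.0 + mult_sigma * rng.standard_normal(image.shape))
    image = image + add_sigma * rng.standard_normal(image.shape)
    return np.clip(image, 0.0, 1.0)
```

With illustrative coefficients β = (0.6, 0.2, 0.1) per metre for (R, G, B), only about 5% of the red signal survives at five metres, which is why distant regions drift towards the blue-green backscatter colour.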

Methods

- UW-GS: Distractor-Aware 3D Gaussian Splatting for Enhanced Underwater Scene Reconstruction (WACV2025)

[Project] [PDF] [Code] [Dataset]

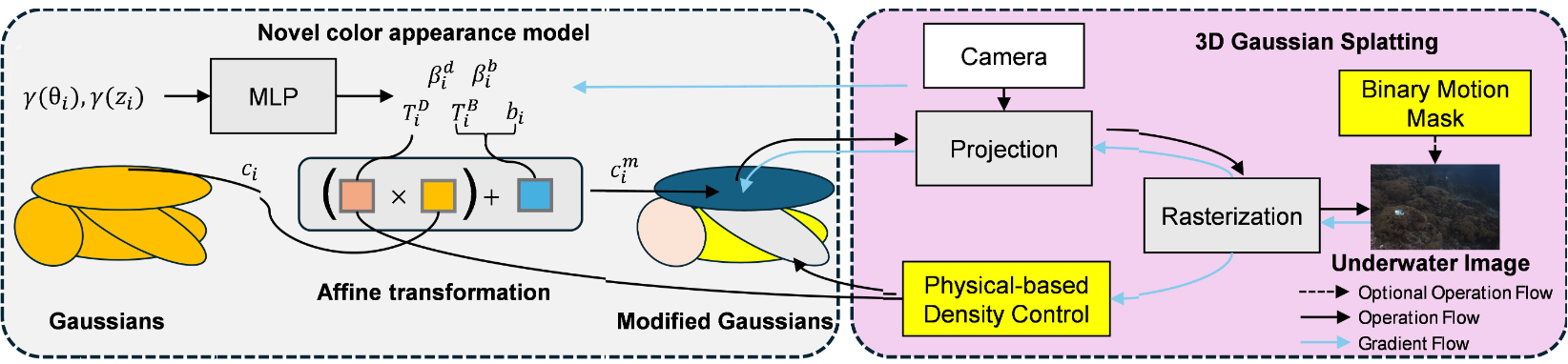

Our Gaussian Splatting-based method introduces a colour appearance model for distance-dependent colour variation, employs a new physics-based density control strategy to enhance clarity for distant objects, and uses a binary motion mask to handle dynamic content. The method is optimised with a loss function designed for scattering media and further supported by pseudo-depth maps.
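The role of a binary motion mask can be illustrated with a masked photometric loss: pixels flagged as dynamic (for example, fish or drifting particles) are excluded, so only static scene content supervises the Gaussians. This is a minimal sketch under our own assumptions; the function name and the choice of an L1 loss are hypothetical, and the scattering-aware terms and pseudo-depth supervision of UW-GS are not shown.

```python
import numpy as np


def masked_l1_loss(rendered, target, motion_mask):
    """L1 photometric loss that ignores pixels flagged as moving distractors.

    rendered, target: H x W x 3 arrays
    motion_mask:      H x W boolean array, True where content is dynamic
    """
    static = ~motion_mask  # pixels the static scene should explain
    if not static.any():
        return 0.0
    # Average absolute error over colour channels, then over static pixels
    per_pixel = np.abs(rendered - target).mean(axis=-1)
    return float(per_pixel[static].mean())
```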

- SWAGSplatting: Semantic-guided Water-scene Augmented Gaussian Splatting

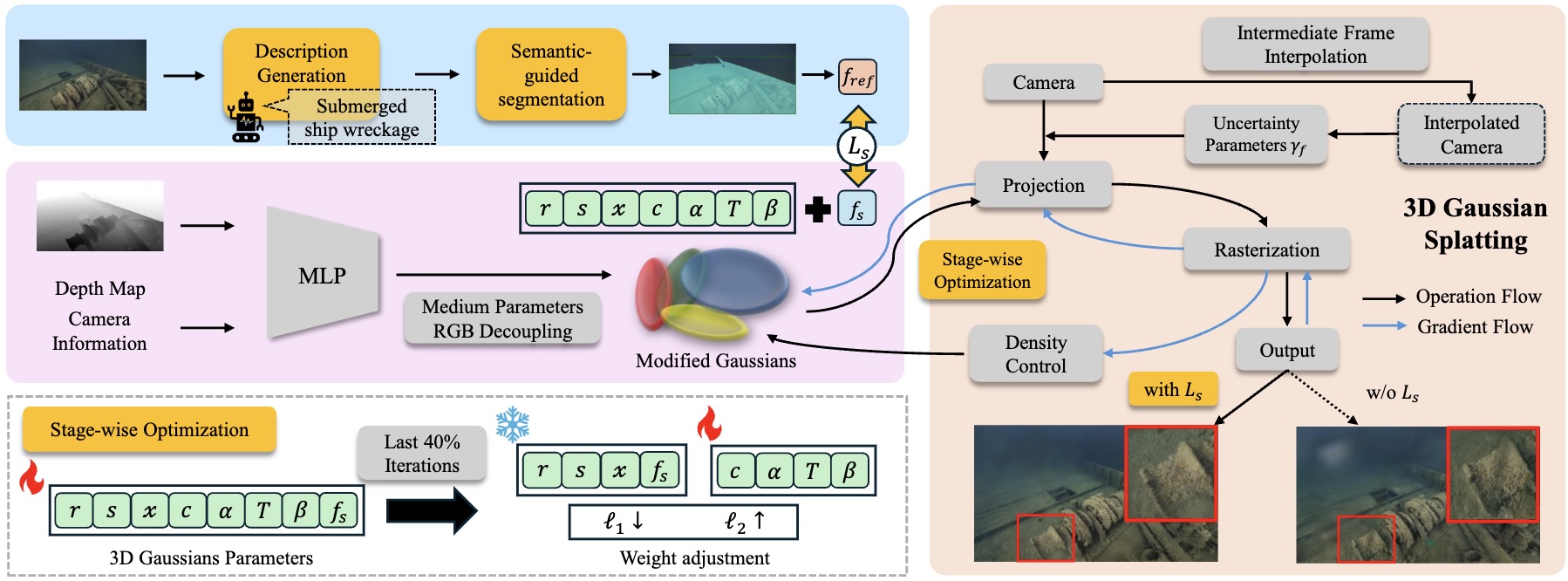

We present a semantic-guided 3D Gaussian Splatting framework for deep-sea scene reconstruction, where each Gaussian embeds CLIP-derived features to enforce semantic and structural consistency. A dedicated semantic loss and stage-wise training strategy further enhance stability and reconstruction fidelity.
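Per-Gaussian features can reach the image through the same front-to-back alpha compositing that 3D Gaussian Splatting uses for colour, after which a semantic loss compares them with features from a frozen encoder such as CLIP. The sketch below assumes a cosine-distance loss; the function names and the exact loss form are hypothetical and are not taken from SWAGSplatting.

```python
import numpy as np


def composite_along_ray(alphas, feats):
    """Front-to-back alpha compositing of per-Gaussian features for one pixel.

    alphas: (N,) opacities of the depth-sorted Gaussians covering the pixel
    feats:  (N, C) per-Gaussian feature vectors
    """
    alphas = np.asarray(alphas, dtype=float)
    # Transmittance before Gaussian i is the product of (1 - alpha_j) for j < i
    transmittance = np.concatenate([[1.0], np.cumprod(1.0 - alphas)[:-1]])
    weights = alphas * transmittance
    return weights @ np.asarray(feats, dtype=float)


def semantic_consistency_loss(rendered_feats, target_feats, eps=1e-8):
    """1 - mean cosine similarity between rendered and reference feature maps.

    rendered_feats: H x W x C features rendered from per-Gaussian embeddings
    target_feats:   H x W x C features from a frozen image encoder
    """
    a = rendered_feats / (np.linalg.norm(rendered_feats, axis=-1, keepdims=True) + eps)
    b = target_feats / (np.linalg.norm(target_feats, axis=-1, keepdims=True) + eps)
    cos = (a * b).sum(axis=-1)
    return float(1.0 - cos.mean())
```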

- Prune Wisely, Reconstruct Sharply: Compact 3D Gaussian Splatting via Adaptive Pruning and Difference-of-Gaussian Primitives (CVPR2026)

[PDF] [Code]

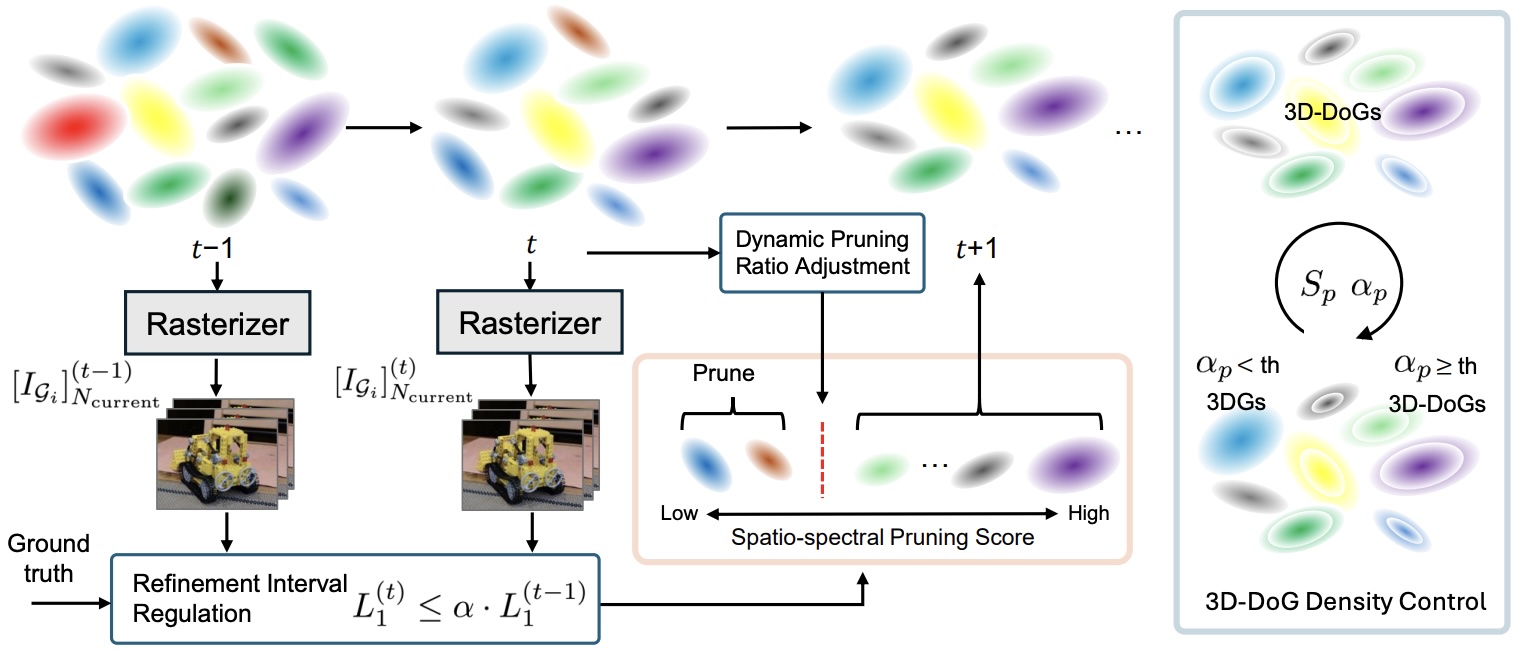

We propose an efficient reconstruction-aware pruning strategy that adaptively schedules pruning and refinement based on reconstruction quality, reducing model size while improving rendering performance. In addition, we introduce a 3D Difference-of-Gaussians primitive that jointly models positive and negative densities within a single primitive, enhancing Gaussian expressiveness under compact representations.
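The sketch below evaluates one possible 3D Difference-of-Gaussians primitive: a positive and a negative Gaussian lobe sharing a centre, so a single primitive can represent a sharper or hollow profile than one Gaussian can. It also includes a quality-gated rule to illustrate scheduling pruning by reconstruction quality. The shared centre, the weights `w_pos` and `w_neg`, the PSNR tolerance and all function names are assumptions for illustration, not the paper's formulation.

```python
import numpy as np


def dog_density(x, mu, cov_pos, cov_neg, w_pos=1.0, w_neg=0.5):
    """Density of one Difference-of-Gaussians primitive at points x (N x 3).

    Both lobes share the centre mu (an assumption); the negative lobe
    subtracts density inside the positive one.
    """
    d = np.atleast_2d(x) - mu

    def lobe(cov):
        inv = np.linalg.inv(cov)
        # Squared Mahalanobis distance for every point
        return np.exp(-0.5 * np.einsum("ni,ij,nj->n", d, inv, d))

    return w_pos * lobe(cov_pos) - w_neg * lobe(cov_neg)


def should_prune(current_psnr, reference_psnr, tolerance_db=0.2):
    """Hypothetical schedule: prune only while quality stays within a
    tolerance of a reference; otherwise spend iterations on refinement."""
    return current_psnr >= reference_psnr - tolerance_db
```

With a narrow negative lobe (covariance 0.1·I) inside a wider positive lobe (I), the density at the centre is w_pos - w_neg = 0.5 and rises towards a shell at unit distance before decaying, a profile a single Gaussian cannot produce.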

- RUSplatting: Robust 3D Gaussian Splatting for Sparse-View Underwater Scene Reconstruction (BMVC2025)

[Project] [PDF] [Code] [Dataset]

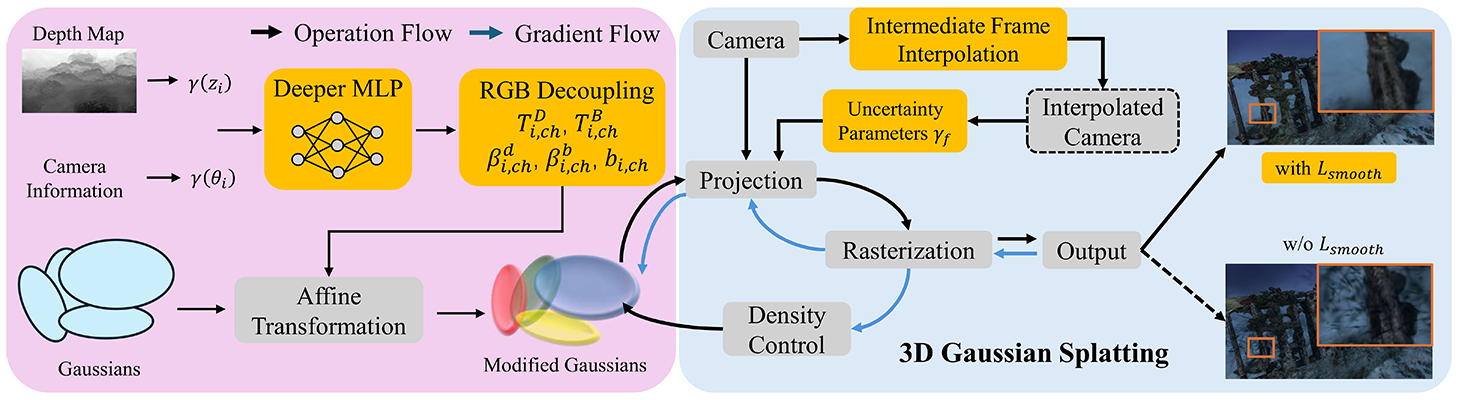

Our enhanced Gaussian Splatting framework improves both visual quality and geometric accuracy in underwater rendering. We employ physics-guided decoupled RGB learning for accurate colour restoration, a frame interpolation strategy with adaptive weighting to address sparse views, and a new loss function that reduces noise while preserving edges, crucial for deep-sea content.

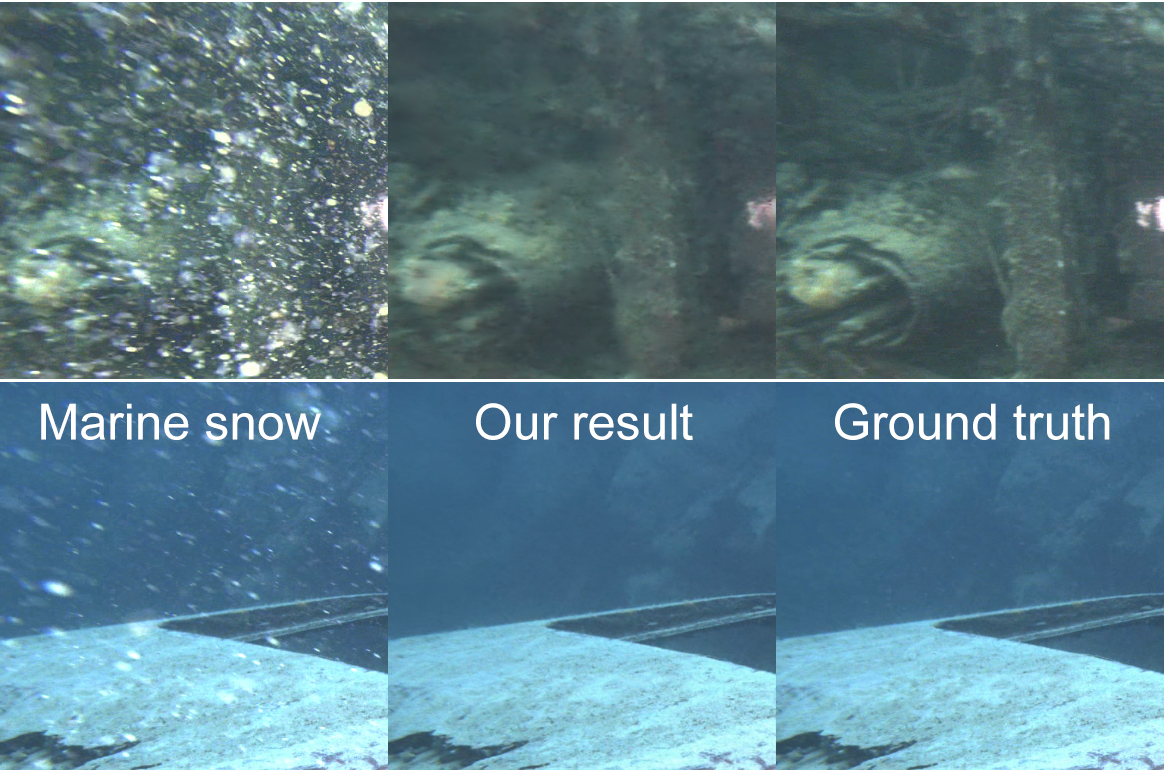
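An edge-preserving term of this kind is often built on bilateral filtering, where neighbours are weighted by both spatial distance and intensity difference so that noise is smoothed while strong edges survive. The sketch below shows a plain (non-adaptive) bilateral filter and a hypothetical loss that compares a render with the filtered target; the parameter values, function names and loss form are assumptions, not RUSplatting's actual loss.

```python
import numpy as np


def bilateral_filter(img, radius=2, sigma_s=1.5, sigma_r=0.1):
    """Edge-preserving smoothing of a 2D grayscale image in [0, 1].

    Each output pixel is a weighted mean of its neighbours; weights decay
    with spatial offset (sigma_s) and with intensity difference (sigma_r).
    """
    h, w = img.shape
    padded = np.pad(img, radius, mode="edge")
    out = np.zeros_like(img, dtype=float)
    norm = np.zeros_like(img, dtype=float)
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            # Neighbour values at offset (dy, dx) for every pixel
            shifted = padded[radius + dy: radius + dy + h, radius + dx: radius + dx + w]
            w_spatial = np.exp(-(dx * dx + dy * dy) / (2 * sigma_s ** 2))
            w_range = np.exp(-((shifted - img) ** 2) / (2 * sigma_r ** 2))
            weight = w_spatial * w_range
            out += weight * shifted
            norm += weight
    return out / norm


def edge_preserving_l1(rendered, target, **kwargs):
    """Hypothetical loss: L1 distance to a bilaterally denoised target."""
    return float(np.abs(rendered - bilateral_filter(target, **kwargs)).mean())
```

Because the range weight exp(-(ΔI)²/2σ_r²) is nearly zero across a large intensity step, pixels on either side of an edge barely mix, whereas small noise fluctuations fall well within σ_r and are averaged out.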

- Marine Snow Removal Using Internally Generated Pseudo Ground Truth (EUSIPCO2025)

[PDF]

The framework introduces a novel method for generating paired datasets from raw underwater videos, producing snowy and snow-free pairs that enable supervised training for video enhancement.

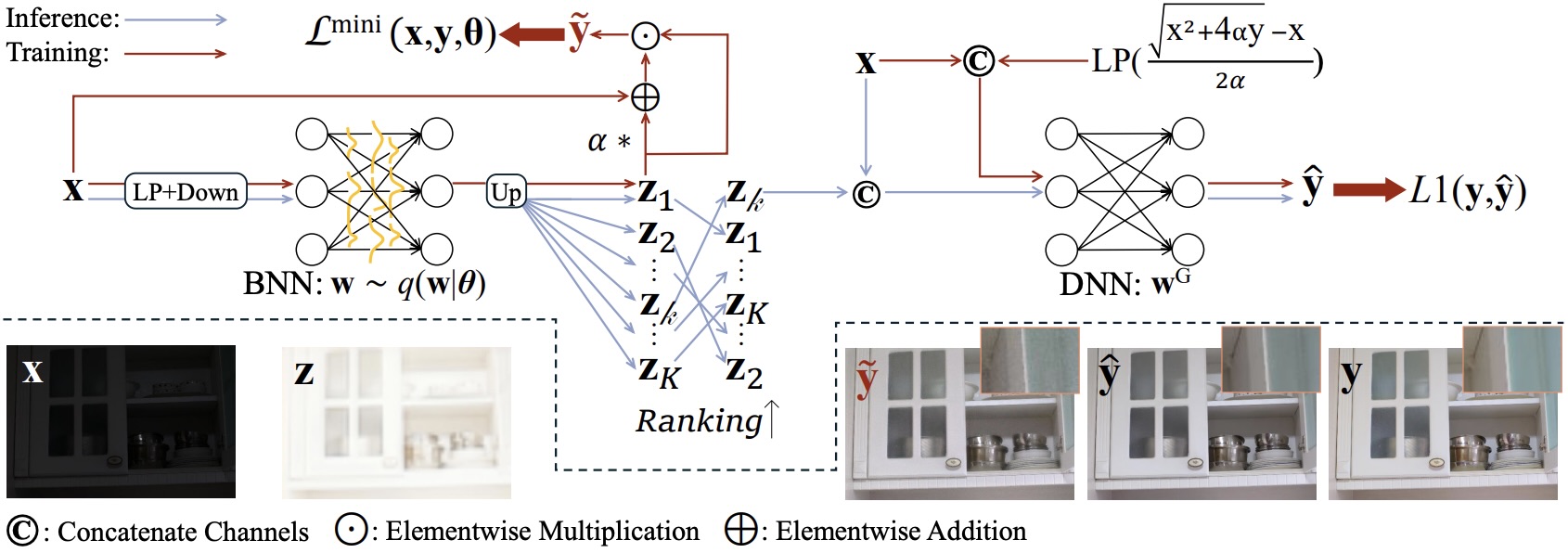
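One generic way to derive snowy/snow-free pairs from a raw video, shown here only as an illustration and not as the method in the paper, exploits the fact that marine snow particles are transient: a per-pixel temporal median over a short window of aligned frames approximates the background, and pixels much brighter than it are treated as snow. The function name, window size, brightness threshold and the assumption of pre-aligned grayscale frames are all hypothetical.

```python
import numpy as np


def make_pseudo_pairs(frames, window=5, snow_threshold=0.15):
    """Build (snowy, pseudo-clean, snow_mask) triples from a video.

    frames: T x H x W grayscale frames in [0, 1], assumed already aligned.
    """
    frames = np.asarray(frames, dtype=float)
    half = window // 2
    pairs = []
    for t in range(half, len(frames) - half):
        stack = frames[t - half: t + half + 1]
        # Transient bright particles rarely occupy the same pixel for long,
        # so the temporal median approximates the snow-free background
        background = np.median(stack, axis=0)
        snow_mask = frames[t] - background > snow_threshold
        pseudo_clean = np.where(snow_mask, background, frames[t])
        pairs.append((frames[t], pseudo_clean, snow_mask))
    return pairs
```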

- Bayesian Neural Networks for One-to-Many Mapping in Image Enhancement (AAAI2026)

We propose a Bayesian Enhancement Model (BEM) that leverages Bayesian Neural Networks (BNNs) to model data uncertainty and generate diverse outputs. For efficient inference, we adopt a BNN–DNN framework, where a BNN captures the one-to-many mapping in a low-dimensional space, followed by a deterministic network that refines fine-grained details.
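The one-to-many behaviour can be illustrated with a Bayesian linear layer whose weights follow a factorised Gaussian posterior and are resampled on every forward pass using the reparameterisation W = μ + σ·ε. Drawing several samples in a low-dimensional space and passing each through one shared deterministic refinement step yields several plausible outputs. This is a toy sketch: the layer sizes, initial values, class and function names, and the `tanh` stand-in for the refinement network are hypothetical and do not reproduce BEM.

```python
import numpy as np


class BayesianLinear:
    """Linear layer with a factorised Gaussian posterior over its weights.

    Each call samples fresh weights W = mu + sigma * eps, eps ~ N(0, I).
    """

    def __init__(self, n_in, n_out, rng):
        self.rng = rng
        self.w_mu = rng.standard_normal((n_in, n_out)) * 0.1
        self.w_log_sigma = np.full((n_in, n_out), -2.0)  # sigma ~ 0.135
        self.b = np.zeros(n_out)

    def __call__(self, x):
        eps = self.rng.standard_normal(self.w_mu.shape)
        w = self.w_mu + np.exp(self.w_log_sigma) * eps
        return x @ w + self.b


def enhance_candidates(low_dim_input, bnn_layer, refine, n_samples=4):
    """Sample several coarse outputs from the BNN, then apply the same
    deterministic refinement to each one."""
    return [refine(bnn_layer(low_dim_input)) for _ in range(n_samples)]
```

In practice μ and log σ would be learned, for instance by variational inference; here they are fixed to keep the example short.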

Research team

Core

- N. Anantrasirichai: Lead academic

- Guoxi Huang (Edward): Postdoctoral researcher, [Paper1] [Paper2] [Paper3]

- Alexandra Malyugina: Postdoctoral researcher, [Paper]

- Haoran Wang: PhD student, [Paper1, UW-GS Project Page] [Paper2]

- Yini Li: PhD student, [Paper]

Undergrad/Postgrad projects

- [Best AI Project Prize] Zhuodong Jiang (2024/2025), Underwater 3D Gaussian Splatting With Frame Interpolation, Colour Channel Decoupling and Adaptive Bilateral Filtering [Thesis] [Paper] [Submerged3D Dataset]

- Luca Gough (2023/2024), 3D Representation of Underwater Scenes using Neural Radiance Fields [Thesis] [Paper]

- George Atkinson (2023/2024), Generative Deep Learning for Temporally Consistent Underwater Video Enhancement [Thesis]

Downloads

Publications

- Prune Wisely, Reconstruct Sharply: Compact 3D Gaussian Splatting via Adaptive Pruning and Difference-of-Gaussian Primitives. H Wang, G Huang, F Zhang, D Bull and N Anantrasirichai, IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2026 [PDF]

- Bayesian Neural Networks for One-to-Many Mapping in Image Enhancement. G Huang, Z Qi, RR Lin, Q Yang, D Bull and N Anantrasirichai, AAAI Conference on Artificial Intelligence, 2026 [PDF] [CODE]

- RUSplatting: Robust 3D Gaussian Splatting for Sparse-View Underwater Scene Reconstruction. Z Jiang, H Wang, G Huang, B Seymour and N Anantrasirichai, 36th British Machine Vision Conference, 2025 [PDF] [CODE] [Dataset]

- UW-GS: Distractor-Aware 3D Gaussian Splatting for Enhanced Underwater Scene Reconstruction. H Wang, N Anantrasirichai, F Zhang and D Bull, IEEE/CVF Winter Conference on Applications of Computer Vision, 2025 [PDF] [CODE] [UW-GS Project Page] [Dataset]

- AquaNeRF: Neural Radiance Fields in Underwater Media with Distractor Removal. L Gough, A Azzarelli, F Zhang and N Anantrasirichai, IEEE International Symposium on Circuits and Systems, 2025 [PDF]

- Marine Snow Removal Using Internally Generated Pseudo Ground Truth. A Malyugina, G Huang, E Ruiz, B Leslie and N Anantrasirichai, 33rd European Signal Processing Conference, 2025 [PDF]

- [Shortlist Best Student Paper Award] Zero-TIG: Temporal Consistency-Aware Zero-Shot Illumination-Guided Low-light Video Enhancement. Y Li and N Anantrasirichai, 33rd European Signal Processing Conference, 2025 [PDF]

- BVI-Mamba: video enhancement using a visual state-space model for low-light and underwater environments. G Huang, R Lin, Y Li, D Bull and N Anantrasirichai, Machine Learning from Challenging Data, 2025 [PDF] [CODE]

White papers

- Visual enhancement and 3D representation for underwater scenes: a review, G Huang, H Wang, B Seymour, E Kovacs, J Ellerbrock, D Blackham, N Anantrasirichai, 2025 [PDF]

- From Restoration to Reconstruction: Rethinking 3D Gaussian Splatting for Underwater Scenes, G Huang, H Wang, Z Qi, W Lu, D Bull, N Anantrasirichai, 2025 [PDF]

Datasets

Related research

Related publications from VI-Lab

- A unified framework for contextual lighting, colorization and denoising for UHD sequences. N Anantrasirichai and D R Bull, IEEE ICIP, 2021

- Artificial intelligence in the creative industries: A review. N Anantrasirichai and D R Bull, Artif Intell Rev 55, 2022

- ST-MFNet Mini: Knowledge distillation-driven frame interpolation. C Morris, D Danier, F Zhang, N Anantrasirichai, D R Bull. IEEE International Conference on Image Processing. 2023